You run the same search query on ChatGPT. Same model, different accounts, all incognito. You get different answers every time.

If you've been testing Answer Engine Optimization (AEO), you've probably noticed this. It's disorienting, especially if you're used to SEO, where rank 1 on Google is roughly rank 1 for everyone. AI search doesn't work that way. Here's why, and what you can actually do about it.

What Is AEO, Quickly

AEO is the practice of structuring your content so AI tools cite your business when answering relevant questions.

ChatGPT. Perplexity. Gemini. Claude. These tools are increasingly where buyers go before they visit a single website. Instead of a blue link on page 1, AEO puts your brand inside the answer itself.

The upside: that kind of visibility is high-trust. The downside: it's harder to measure, and it's not consistent across users or sessions. Understanding why is the first step to doing something about it.

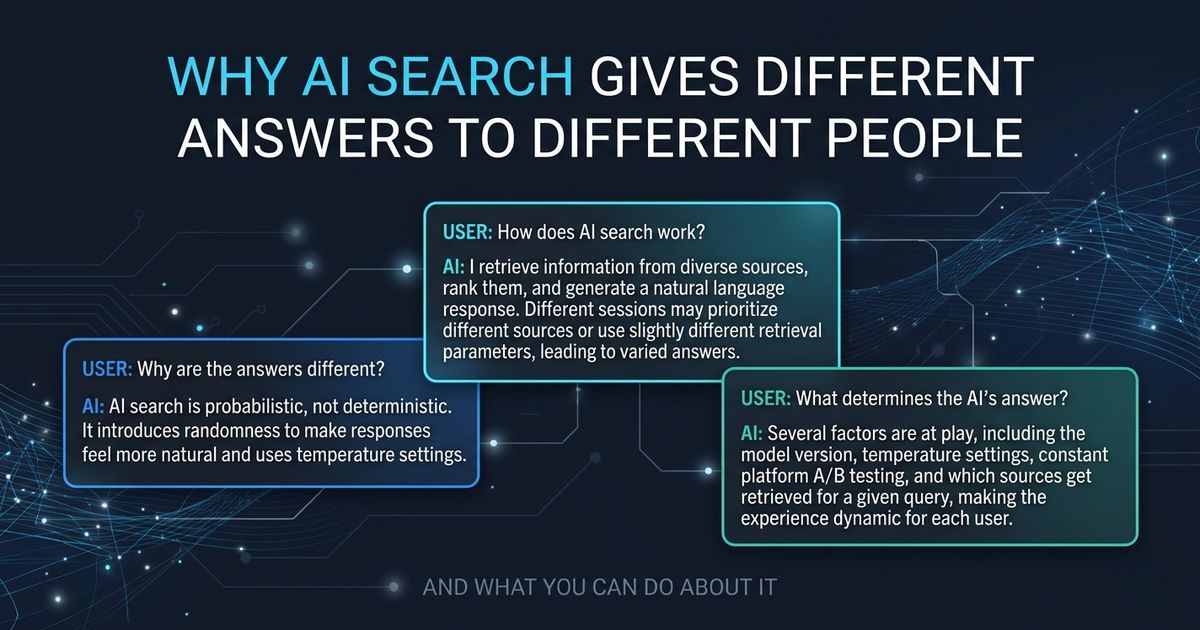

Why the Answers Are Different

1. LLMs are non-deterministic by design

Most language models run with a temperature setting above zero. Temperature controls how much randomness goes into choosing each next token (roughly, each next word), which shows up as variation in how the response is worded. At zero, the model always picks the most likely next token. Above zero, it also samples less likely options, which makes responses feel more natural.
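Here's a minimal sketch of what temperature does when a model picks its next word (token). The candidate words and their scores are made up for illustration, and real platforms layer more sampling logic on top, so treat this as a toy model rather than how any specific product works.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        # Treat zero as greedy decoding: all probability on the top score.
        top = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == top else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(tokens, logits, temperature, rng):
    """Draw one token according to the temperature-scaled probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


# Hypothetical next-token candidates and scores, for illustration only.
tokens = ["price", "value", "rate"]
logits = [2.0, 1.5, 0.5]

rng = random.Random(0)  # fixed seed so the demo is repeatable
greedy = {sample_token(tokens, logits, 0.0, rng) for _ in range(20)}
varied = {sample_token(tokens, logits, 1.0, rng) for _ in range(200)}

print("temperature 0.0:", greedy)  # only the top-scoring token ever appears
print("temperature 1.0:", varied)  # lower-scoring tokens show up too
```

At temperature zero the same token wins every run. At 1.0 the lower-scoring candidates get picked some of the time, which is the wording variation you see between sessions.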

But temperature has less influence on which sources get cited than most people assume. The bigger variables are retrieval window size, how candidate sources get ranked before the model even sees them, and how the prompt gets orchestrated upstream. Change any of those and you get different citations, even with identical wording in your query and identical temperature settings.
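To see why the retrieval window and ranking matter more than wording, here's a toy sketch. The source names and relevance scores are hypothetical, and real retrieval pipelines are far more involved. The point is only that a small change in window size or ranking changes who is even eligible to be cited.

```python
def sources_in_window(candidates, window_size):
    """Rank candidates by score (best first) and keep only what fits the window."""
    ranked = sorted(candidates, key=lambda c: c[1], reverse=True)
    return [name for name, _score in ranked[:window_size]]


# Hypothetical candidate pages with made-up relevance scores.
candidates = [
    ("site-a.example", 0.91),
    ("site-b.example", 0.88),
    ("your-site.example", 0.86),
    ("site-c.example", 0.60),
]

narrow = sources_in_window(candidates, window_size=2)
wide = sources_in_window(candidates, window_size=3)

# A small ranking change (say, a different ranking config in a test cohort)
# is enough to move your page into the narrow window.
reranked = [
    (name, score + 0.06 if name == "your-site.example" else score)
    for name, score in candidates
]
narrow_after = sources_in_window(reranked, window_size=2)

print("window of 2:", narrow)
print("window of 3:", wide)
print("window of 2 after rerank:", narrow_after)
```

The query never changed in any of these three runs. Only the upstream configuration did.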

2. Platforms A/B test continuously

ChatGPT, Perplexity, and others run constant experiments on their user base. Different accounts land in different test cohorts: different retrieval configs, different prompt templates, different ranking logic. You'd never know you're in an experiment. The platform looks identical from the outside.

3. The index isn't frozen

For AI tools with live web access (Perplexity especially), the underlying index updates constantly. Two queries minutes apart can pull from slightly different crawl snapshots. A page indexed this morning may surface differently than the same page indexed last week.

4. Account tier and context

Free accounts, paid accounts, and logged-in accounts sometimes route to different model versions, different tool access, or different context depths, even when the platform labels them identically. “GPT-4o” for a free user and “GPT-4o” for a paid user may not behave the same under the hood.

5. Context drift within a session

This one catches people off guard. If you've already asked several questions in a chat session before running your test query, the model's previous responses influence what it generates next. Earlier tokens in the conversation shift the probability distribution for everything that follows, including which sources get cited. A cold session and a warm session will often produce different answers to the same query, even on the same account, same model, same minute.

The Right Way to Think About This

Think of AI search like rolling weighted dice.

Your content doesn't guarantee the result. It shifts the probability that your answer comes up.

Stop trying to make AI cite you every single time. That's not achievable, and chasing it will produce bad strategy. Instead, think in citation rates.

Your goal is to increase the percentage of runs where AI tools cite your content for your target queries. Moving from a 10% citation rate to a 40% citation rate is a 4x improvement in AI search presence. That compounds fast as AI search volume grows.

It's similar to SEO in 2008. You couldn't guarantee rank 1 every time, but consistent signals made rank 1 far more probable over time. AEO works the same way. You're building probability, not locking in a position.

What Actually Improves Your Citation Rate

Write answers, not just articles

AI models skim for extractable answers. If your main point is buried after three paragraphs of context-setting, it gets skipped.

Lead with the answer. Put the core conclusion in your first one or two sentences. Then explain.

Before

“In the world of real estate, many factors affect property value, and one of the most significant is the government's valuation system...”

After

“Zonal value is the government's floor price for land per square meter, set by the BIR. It directly affects transfer taxes and capital gains tax when buying or selling property.”

The second version gets cited. The first doesn't.

Add definition anchors

LLMs look for clean, extractable definitions. A short “What is X” section structured as a direct answer, not buried inside a paragraph, dramatically increases the chance that section gets pulled into an AI response.

FAQ sections and definition pages in properly structured HTML tend to get extracted more often than narrative prose. Add schema.org structured data, specifically FAQPage and Article markup, to every page where it's relevant.
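As a rough sketch, this is one way to generate FAQPage markup as JSON-LD. The structure follows the schema.org FAQPage type. The helper name `faq_jsonld` and the question text are illustrative assumptions, and the answer reuses the zonal value definition from the example above.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Illustrative content only: swap in your own questions and answers.
faq = faq_jsonld([
    (
        "What is zonal value?",
        "Zonal value is the government's floor price for land per square "
        "meter, set by the BIR. It directly affects transfer taxes and "
        "capital gains tax when buying or selling property.",
    ),
])

# Wrap the JSON-LD in the script tag that goes into the page's HTML.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(faq, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Keep the answer text in the markup identical to the visible answer on the page, so the structured version and the prose version say the same thing.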

Build redundancy across sources

When the same facts about your business appear on multiple independent sources (your website, a directory listing, a third-party article), AI retrieval systems treat that as higher-confidence information. One source is a claim. Three independent sources that all resolve to the same entity start to look like a verified fact.

This is N-point verification working in your favor. The more retrieval sources that consistently describe your business the same way, the more confidently an AI model will cite you. Inconsistent or contradictory information across sources does the opposite: it introduces ambiguity that models resolve by citing someone else.

The practical implication: make sure your business name, location, and core services are described consistently and explicitly across every platform you publish on. Not just your website. LinkedIn, GitHub, directory profiles, guest posts: every mention is a data point the retrieval system can cross-reference.
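A quick way to audit this is to list how each platform describes you and flag the fields that disagree. The sketch below assumes you've collected the profile text by hand. The business name, sources, and field names are hypothetical.

```python
import re


def normalize(text):
    """Lowercase, drop punctuation, and collapse whitespace so trivial
    formatting differences don't count as inconsistencies."""
    text = re.sub(r"[^\w\s]", " ", text.lower())
    return " ".join(text.split())


def find_inconsistencies(profiles, fields=("name", "location", "services")):
    """Return {field: {source: value}} for fields whose values differ."""
    issues = {}
    for field in fields:
        values = {source: data.get(field, "") for source, data in profiles.items()}
        if len({normalize(value) for value in values.values()}) > 1:
            issues[field] = values
    return issues


# Hypothetical profile data, collected manually from each platform.
profiles = {
    "website": {
        "name": "Example Realty",
        "location": "Santa Rosa, Laguna",
        "services": "Real estate brokerage",
    },
    "linkedin": {
        "name": "Example Realty",
        "location": "Santa Rosa Laguna",
        "services": "Real estate brokerage",
    },
    "directory": {
        "name": "Example Realty Inc.",
        "location": "Santa Rosa, Laguna",
        "services": "Real estate brokerage",
    },
}

issues = find_inconsistencies(profiles)
for field, values in issues.items():
    print(f"Inconsistent {field}:")
    for source, value in values.items():
        print(f"  {source}: {value}")
```

Here the missing comma in the LinkedIn location is ignored, but the extra "Inc." in the directory listing gets flagged as a name mismatch worth fixing.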

This matters especially for Philippine businesses. Local content is thin. If you're the only structured, consistent source on a specific topic in a Philippine context, your citation rate will naturally be higher. The bar to become the default answer is lower here than in saturated markets.

Name your entities explicitly, and relate them

Write your brand name, location, and topic in close proximity. “Aaron Zara, a licensed real estate broker based in Santa Rosa, Laguna” is far more citable than “our lead broker.” AI needs clearly named entities to attribute correctly. Vague references get dropped.

Go one step further where you can: tie the entity to an attribute and a value in the same sentence. “REN.PH is a Philippine real estate data platform covering 60,000+ verified property listings and broker profiles” gives an AI model three things to work with: the entity (REN.PH), what it is (a Philippine real estate data platform), and a specific verifiable fact (60,000+ verified listings and broker profiles). That kind of sentence gets extracted and cited. A sentence that says “our platform has a lot of listings” does not.

Publish and update consistently

AI tools with live web search favor recently indexed content. A page last updated in 2022 competes poorly against one updated this month. Regular publishing, even minor updates, keeps your content fresh in the retrieval pool.

How to Measure Your Citation Rate

Since AI answers vary per session, a single check tells you nothing. You need a baseline built from repeated testing.

- Run the same query 10 times across separate sessions and count how many responses cite you. Cited runs divided by total runs is your baseline citation rate for that query.

- Test across platforms separately. Perplexity, ChatGPT, and Gemini behave differently and pull from different retrieval systems.

- Retest monthly. You're looking for a trend, not a snapshot.
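The steps above can be sketched in a few lines. The run results below are hypothetical. The Wilson score interval is my own addition, not part of any AEO tool: it's a standard way to show how much uncertainty a small number of runs leaves around the rate.

```python
import math


def citation_rate(results):
    """Share of runs that cited you. `results` is a list of True/False values."""
    if not results:
        raise ValueError("need at least one run")
    return sum(results) / len(results)


def wilson_interval(cited, runs, z=1.96):
    """Approximate 95% Wilson score interval for a citation rate."""
    if runs <= 0:
        raise ValueError("need at least one run")
    p = cited / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    margin = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, center - margin), min(1.0, center + margin)


# Hypothetical log: 10 separate sessions, True means the answer cited you.
runs = [True, False, False, True, False, True, False, False, True, False]

rate = citation_rate(runs)
low, high = wilson_interval(sum(runs), len(runs))
print(f"citation rate: {rate:.0%} (plausible range {low:.0%} to {high:.0%})")
```

With only 10 runs the plausible range is wide, so a shift of a few points from one month to the next may just be noise. More runs per query narrow the range and make the trend easier to trust.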

The tooling for AEO tracking is still early. Most businesses haven't started measuring at all. That gap is the opportunity. Whoever establishes citation rate baselines now will have a meaningful head start when the tooling catches up and the practice becomes standard.

The Bottom Line

AI search is probabilistic, not deterministic. The variation you're seeing isn't a measurement error. It's the system working as designed.

You can't force consistency. You can make yourself the statistically likely answer.

Clean structure. Direct answers. Named entities. Fresh content. Corroborating sources across multiple domains. That's what moves the citation rate.

AEO isn't about gaming a single result. It's about building the kind of content AI systems naturally reach for when your topic comes up. Start measuring your citation rate. That's the only number that matters.

Aaron Zara builds Philippine data infrastructure for AI. Founder of Godmode Digital. Engineer behind REN.PH. Creator of the PSGC and LTS MCP servers.